we provide High value Amazon AWS-Certified-Machine-Learning-Specialty exam which are the best for clearing AWS-Certified-Machine-Learning-Specialty test, and to get certified by Amazon AWS Certified Machine Learning - Specialty. The AWS-Certified-Machine-Learning-Specialty Questions & Answers covers all the knowledge points of the real AWS-Certified-Machine-Learning-Specialty exam. Crack your Amazon AWS-Certified-Machine-Learning-Specialty Exam with latest dumps, guaranteed!

Check AWS-Certified-Machine-Learning-Specialty free dumps before getting the full version:

NEW QUESTION 1

A Machine Learning Specialist is developing a custom video recommendation model for an application The dataset used to train this model is very large with millions of data points and is hosted in an Amazon S3 bucket The Specialist wants to avoid loading all of this data onto an Amazon SageMaker notebook instance because it would take hours to move and will exceed the attached 5 GB Amazon EBS volume on the notebook instance.

Which approach allows the Specialist to use all the data to train the model?

- A. Load a smaller subset of the data into the SageMaker notebook and train locall

- B. Confirm that thetraining code is executing and the model parameters seem reasonabl

- C. Initiate a SageMaker training job using the full dataset from the S3 bucket using Pipe input mode.

- D. Launch an Amazon EC2 instance with an AWS Deep Learning AMI and attach the S3 bucket to theinstanc

- E. Train on a small amount of the data to verify the training code and hyperparameter

- F. Go back toAmazon SageMaker and train using the full dataset

- G. Use AWS Glue to train a model using a small subset of the data to confirm that the data will be compatible with Amazon SageMake

- H. Initiate a SageMaker training job using the full dataset from the S3 bucket using Pipe input mode.

- I. Load a smaller subset of the data into the SageMaker notebook and train locall

- J. Confirm that the training code is executing and the model parameters seem reasonabl

- K. Launch an Amazon EC2 instance with an AWS Deep Learning AMI and attach the S3 bucket to train the full dataset.

Answer: A

NEW QUESTION 2

A company has raw user and transaction data stored in AmazonS3 a MySQL database, and Amazon RedShift A Data Scientist needs to perform an analysis by joining the three datasets from Amazon S3, MySQL, and Amazon RedShift, and then calculating the average-of a few selected columns from the joined data

Which AWS service should the Data Scientist use?

- A. Amazon Athena

- B. Amazon Redshift Spectrum

- C. AWS Glue

- D. Amazon QuickSight

Answer: A

NEW QUESTION 3

The chief editor for a product catalog wants the research and development team to build a machine learning system that can be used to detect whether or not individuals in a collection of images are wearing the company's retail brand. The team has a set of training data.

Which machine learning algorithm should the researchers use that BEST meets their requirements?

- A. Latent Dirichlet Allocation (LDA)

- B. Recurrent neural network (RNN)

- C. K-means

- D. Convolutional neural network (CNN)

Answer: D

NEW QUESTION 4

A Machine Learning Specialist trained a regression model, but the first iteration needs optimizing. The Specialist needs to understand whether the model is more frequently overestimating or underestimating the target.

What option can the Specialist use to determine whether it is overestimating or underestimating the target value?

- A. Root Mean Square Error (RMSE)

- B. Residual plots

- C. Area under the curve

- D. Confusion matrix

Answer: B

NEW QUESTION 5

A Machine Learning Specialist is applying a linear least squares regression model to a dataset with 1 000 records and 50 features Prior to training, the ML Specialist notices that two features are perfectly linearly dependent

Why could this be an issue for the linear least squares regression model?

- A. It could cause the backpropagation algorithm to fail during training

- B. It could create a singular matrix during optimization which fails to define a unique solution

- C. It could modify the loss function during optimization causing it to fail during training

- D. It could introduce non-linear dependencies within the data which could invalidate the linear assumptions of the model

Answer: C

NEW QUESTION 6

A Data Scientist is training a multilayer perception (MLP) on a dataset with multiple classes. The target class of interest is unique compared to the other classes within the dataset, but it does not achieve and acceptable recall metric. The Data Scientist has already tried varying the number and size of the MLP’s hidden layers, which has not significantly improved the results. A solution to improve recall must be implemented as quickly as possible.

Which techniques should be used to meet these requirements?

- A. Gather more data using Amazon Mechanical Turk and then retrain

- B. Train an anomaly detection model instead of an MLP

- C. Train an XGBoost model instead of an MLP

- D. Add class weights to the MLP’s loss function and then retrain

Answer: C

NEW QUESTION 7

A manufacturing company wants to use machine learning (ML) to automate quality control in its facilities. The facilities are in remote locations and have limited internet connectivity. The company has 20 of training data that consists of labeled images of defective product parts. The training data is in the corporate on-premises data center.

The company will use this data to train a model for real-time defect detection in new parts as the parts move on a conveyor belt in the facilities. The company needs a solution that minimizes costs for compute infrastructure and that maximizes the scalability of resources for training. The solution also must facilitate the company’s use of an ML model in the low-connectivity environments.

Which solution will meet these requirements?

- A. Move the training data to an Amazon S3 bucke

- B. Train and evaluate the model by using Amazon SageMake

- C. Optimize the model by using SageMaker Ne

- D. Deploy the model on a SageMaker hosting services endpoint.

- E. Train and evaluate the model on premise

- F. Upload the model to an Amazon S3 bucke

- G. Deploy the model on an Amazon SageMaker hosting services endpoint.

- H. Move the training data to an Amazon S3 bucke

- I. Train and evaluate the model by using Amazon SageMake

- J. Optimize the model by using SageMaker Ne

- K. Set up an edge device in the manufacturing facilities with AWS IoT Greengras

- L. Deploy the model on the edge device.

- M. Train the model on premise

- N. Upload the model to an Amazon S3 bucke

- O. Set up an edge device in the manufacturing facilities with AWS IoT Greengras

- P. Deploy the model on the edge device.

Answer: A

NEW QUESTION 8

Amazon Connect has recently been tolled out across a company as a contact call center The solution has been configured to store voice call recordings on Amazon S3

The content of the voice calls are being analyzed for the incidents being discussed by the call operators Amazon Transcribe is being used to convert the audio to text, and the output is stored on Amazon S3

Which approach will provide the information required for further analysis?

- A. Use Amazon Comprehend with the transcribed files to build the key topics

- B. Use Amazon Translate with the transcribed files to train and build a model for the key topics

- C. Use the AWS Deep Learning AMI with Gluon Semantic Segmentation on the transcribed files to train and build a model for the key topics

- D. Use the Amazon SageMaker k-Nearest-Neighbors (kNN) algorithm on the transcribed files to generate a word embeddings dictionary for the key topics

Answer: B

NEW QUESTION 9

A data scientist is working on a public sector project for an urban traffic system. While studying the traffic patterns, it is clear to the data scientist that the traffic behavior at each light is correlated, subject to a small stochastic error term. The data scientist must model the traffic behavior to analyze the traffic patterns and reduce congestion.

How will the data scientist MOST effectively model the problem?

- A. The data scientist should obtain a correlated equilibrium policy by formulating this problem as a multi-agent reinforcement learning problem.

- B. The data scientist should obtain the optimal equilibrium policy by formulating this problem as a single-agent reinforcement learning problem.

- C. Rather than finding an equilibrium policy, the data scientist should obtain accurate predictors of traffic flow by using historical data through a supervised learning approach.

- D. Rather than finding an equilibrium policy, the data scientist should obtain accurate predictors of traffic flow by using unlabeled simulated data representing the new traffic patterns in the city and applying an unsupervised learning approach.

Answer: D

NEW QUESTION 10

A machine learning specialist is running an Amazon SageMaker endpoint using the built-in object detection algorithm on a P3 instance for real-time predictions in a company's production application. When evaluating the model's resource utilization, the specialist notices that the model is using only a fraction of the GPU.

Which architecture changes would ensure that provisioned resources are being utilized effectively?

- A. Redeploy the model as a batch transform job on an M5 instance.

- B. Redeploy the model on an M5 instanc

- C. Attach Amazon Elastic Inference to the instance.

- D. Redeploy the model on a P3dn instance.

- E. Deploy the model onto an Amazon Elastic Container Service (Amazon ECS) cluster using a P3 instance.

Answer: B

Explanation:

https://aws.amazon.com/machine-learning/elastic-inference/

NEW QUESTION 11

A real-estate company is launching a new product that predicts the prices of new houses. The historical data for the properties and prices is stored in .csv format in an Amazon S3 bucket. The data has a header, some categorical fields, and some missing values. The company’s data scientists have used Python with a common open-source library to fill the missing values with zeros. The data scientists have dropped all of the categorical fields and have trained a model by using the open-source linear regression algorithm with the default parameters.

The accuracy of the predictions with the current model is below 50%. The company wants to improve the model performance and launch the new product as soon as possible.

Which solution will meet these requirements with the LEAST operational overhead?

- A. Create a service-linked role for Amazon Elastic Container Service (Amazon ECS) with access to the S3 bucke

- B. Create an ECS cluster that is based on an AWS Deep Learning Containers imag

- C. Write the code to perform the feature engineerin

- D. Train a logistic regression model for predicting the price, pointing to the bucket with the datase

- E. Wait for the training job to complet

- F. Perform the inferences.

- G. Create an Amazon SageMaker notebook with a new IAM role that is associated with the noteboo

- H. Pull the dataset from the S3 bucke

- I. Explore different combinations of feature engineering transformations,regression algorithms, and hyperparameter

- J. Compare all the results in the notebook, and deploy the most accurate configuration in an endpoint for predictions.

- K. Create an IAM role with access to Amazon S3, Amazon SageMaker, and AWS Lambd

- L. Create a training job with the SageMaker built-in XGBoost model pointing to the bucket with the datase

- M. Specify the price as the target featur

- N. Wait for the job to complet

- O. Load the model artifact to a Lambda function for inference on prices of new houses.

- P. Create an IAM role for Amazon SageMaker with access to the S3 bucke

- Q. Create a SageMaker AutoML job with SageMaker Autopilot pointing to the bucket with the datase

- R. Specify the price as the target attribut

- S. Wait for the job to complet

- T. Deploy the best model for predictions.

Answer: A

NEW QUESTION 12

A machine learning (ML) specialist needs to extract embedding vectors from a text series. The goal is to provide a ready-to-ingest feature space for a data scientist to develop downstream ML predictive models. The text consists of curated sentences in English. Many sentences use similar words but in different contexts. There are questions and answers among the sentences, and the embedding space must differentiate between them.

Which options can produce the required embedding vectors that capture word context and sequential QA information? (Choose two.)

- A. Amazon SageMaker seq2seq algorithm

- B. Amazon SageMaker BlazingText algorithm in Skip-gram mode

- C. Amazon SageMaker Object2Vec algorithm

- D. Amazon SageMaker BlazingText algorithm in continuous bag-of-words (CBOW) mode

- E. Combination of the Amazon SageMaker BlazingText algorithm in Batch Skip-gram mode with a custom recurrent neural network (RNN)

Answer: AC

NEW QUESTION 13

A Machine Learning Specialist working for an online fashion company wants to build a data ingestion solution for the company's Amazon S3-based data lake.

The Specialist wants to create a set of ingestion mechanisms that will enable future capabilities comprised of:

• Real-time analytics

• Interactive analytics of historical data

• Clickstream analytics

• Product recommendations

Which services should the Specialist use?

- A. AWS Glue as the data dialog; Amazon Kinesis Data Streams and Amazon Kinesis Data Analytics for real-time data insights; Amazon Kinesis Data Firehose for delivery to Amazon ES for clickstream analytics; Amazon EMR to generate personalized product recommendations

- B. Amazon Athena as the data catalog; Amazon Kinesis Data Streams and Amazon Kinesis Data Analytics for near-realtime data insights; Amazon Kinesis Data Firehose for clickstream analytics; AWS Glue to generate personalized product recommendations

- C. AWS Glue as the data catalog; Amazon Kinesis Data Streams and Amazon Kinesis Data Analytics for historical data insights; Amazon Kinesis Data Firehose for delivery to Amazon ES for clickstream analytics; Amazon EMR to generate personalized product recommendations

- D. Amazon Athena as the data catalog; Amazon Kinesis Data Streams and Amazon Kinesis Data Analytics for historical data insights; Amazon DynamoDB streams for clickstream analytics; AWS Glue to generate personalized product recommendations

Answer: A

NEW QUESTION 14

A web-based company wants to improve its conversion rate on its landing page Using a large historical dataset of customer visits, the company has repeatedly trained a multi-class deep learning network algorithm on Amazon SageMaker However there is an overfitting problem training data shows 90% accuracy in predictions, while test data shows 70% accuracy only

The company needs to boost the generalization of its model before deploying it into production to maximize conversions of visits to purchases

Which action is recommended to provide the HIGHEST accuracy model for the company's test and validation data?

- A. Increase the randomization of training data in the mini-batches used in training.

- B. Allocate a higher proportion of the overall data to the training dataset

- C. Apply L1 or L2 regularization and dropouts to the training.

- D. Reduce the number of layers and units (or neurons) from the deep learning network.

Answer: C

Explanation:

If this is a ComputerVision problem augmentation can help and we may consider A an option. However in analyzing customer historic data, there is no easy way to increase randomization in training. If you go deep into modelling and coding. When you build model with tensorflow/pytorch, most of the time the trainloader is already sampling in data in random manner (with shuffle enable). What we usually do to reduce overfitting is by adding dropout.

https://docs.aws.amazon.com/machine-learning/latest/dg/model-fit-underfitting-vs-overfitting.html

NEW QUESTION 15

A Machine Learning Specialist needs to be able to ingest streaming data and store it in Apache Parquet files for exploration and analysis. Which of the following services would both ingest and store this data in the correct format?

- A. AWSDMS

- B. Amazon Kinesis Data Streams

- C. Amazon Kinesis Data Firehose

- D. Amazon Kinesis Data Analytics

Answer: C

NEW QUESTION 16

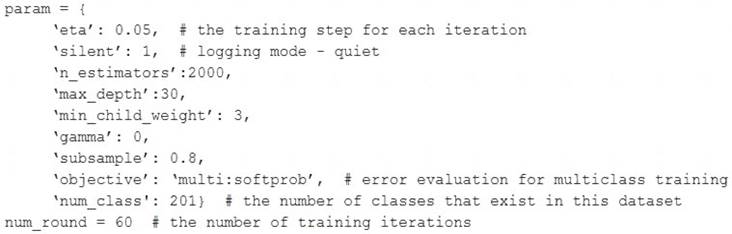

A Machine Learning Specialist is assigned to a Fraud Detection team and must tune an XGBoost model, which is working appropriately for test data. However, with unknown data, it is not working as expected. The existing parameters are provided as follows.

Which parameter tuning guidelines should the Specialist follow to avoid overfitting?

- A. Increase the max_depth parameter value.

- B. Lower the max_depth parameter value.

- C. Update the objective to binary:logistic.

- D. Lower the min_child_weight parameter value.

Answer: B

NEW QUESTION 17

......

P.S. Easily pass AWS-Certified-Machine-Learning-Specialty Exam with 307 Q&As Allfreedumps.com Dumps & pdf Version, Welcome to Download the Newest Allfreedumps.com AWS-Certified-Machine-Learning-Specialty Dumps: https://www.allfreedumps.com/AWS-Certified-Machine-Learning-Specialty-dumps.html (307 New Questions)