Your success in Amazon AWS-Certified-Security-Specialty is our sole target and we develop all our AWS-Certified-Security-Specialty braindumps in a way that facilitates the attainment of this target. Not only is our AWS-Certified-Security-Specialty study material the best you can find, it is also the most detailed and the most updated. AWS-Certified-Security-Specialty Practice Exams for Amazon AWS-Certified-Security-Specialty are written to the highest standards of technical accuracy.

Amazon AWS-Certified-Security-Specialty Free Dumps Questions Online, Read and Test Now.

NEW QUESTION 1

An organization must establish the ability to delete an AWS KMS Customer Master Key (CMK) within a 24-hour timeframe to keep it from being used for encrypt or decrypt operations. Which of the following actions will address this requirement?

- A. Manually rotate a key within KMS to create a new CMK immediately

- B. Use the KMS import key functionality to execute a delete key operation

- C. Use the schedule key deletion function within KMS to specify the minimum wait period for deletion

- D. Change the KMS CMK alias to immediately prevent any services from using the CMK.

Answer: C

Explanation:

The schedule key deletion function within KMS allows you to specify a waiting period before deleting a customer master key (CMK). The minimum waiting period is 7 days and the maximum is 30 days. This function prevents the CMK from being used for encryption or decryption operations during the waiting period. The other options are either invalid or ineffective for deleting a CMK within a 24-hour timeframe.

NEW QUESTION 2

A company has an AWS account that includes an Amazon S3 bucket. The S3 bucket uses server-side encryption with AWS KMS keys (SSE-KMS) to encrypt all the objects at rest by using a customer managed key. The S3 bucket does not have a bucket policy.

An IAM role in the same account has an IAM policy that allows s3:List* and s3:Get* permissions for the S3 bucket. When the IAM role attempts to access an object in the S3 bucket, the role receives an access denied message.

Why does the IAM role not have access to the objects that are in the S3 bucket?

- A. The IAM role does not have permission to use the KMS CreateKey operation.

- B. The S3 bucket lacks a policy that allows access to the customer managed key that encrypts the objects.

- C. The IAM role does not have permission to use the customer managed key that encrypts the objects that are in the S3 bucket.

- D. The ACL of the S3 objects does not allow read access for the objects when the objects are encrypted at rest.

Answer: C

Explanation:

When using server-side encryption with AWS KMS keys (SSE-KMS), the requester must have both Amazon S3 permissions and AWS KMS permissions to access the objects. The Amazon S3 permissions are for the bucket and object operations, such as s3:ListBucket and s3:GetObject. The AWS KMS permissions are for the key operations, such as kms:GenerateDataKey and kms:Decrypt. In this case, the IAM role has the necessary Amazon S3 permissions, but not the AWS KMS permissions to use the customer managed key that encrypts the objects. Therefore, the IAM role receives an access denied message when trying to access the objects.

Verified References:

https://docs.aws.amazon.com/AmazonS3/latest/userguide/troubleshoot-403-errors.html

https://repost.aws/knowledge-center/s3-access-denied-error-kms

https://repost.aws/knowledge-center/cross-account-access-denied-error-s3
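
As a rough sketch of the fix, the role's identity policy would need a statement for the customer managed key next to its S3 statement. The bucket name, account ID, and key ID below are placeholders rather than values from the question, and this assumes the key policy lets IAM policies in the account grant access to the key.

```python
import json

# Hypothetical identifiers, for illustration only.
BUCKET = "example-bucket"
KEY_ARN = "arn:aws:kms:us-east-1:111122223333:key/1234abcd-12ab-34cd-56ef-1234567890ab"


def build_read_policy(bucket: str, key_arn: str) -> dict:
    """Return an identity policy that allows S3 reads plus KMS decryption."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadObjects",
                "Effect": "Allow",
                "Action": ["s3:List*", "s3:Get*"],
                "Resource": [
                    f"arn:aws:s3:::{bucket}",
                    f"arn:aws:s3:::{bucket}/*",
                ],
            },
            {
                # Without this statement, reads of SSE-KMS objects are denied.
                "Sid": "UseKey",
                "Effect": "Allow",
                "Action": ["kms:Decrypt"],
                "Resource": key_arn,
            },
        ],
    }


policy = build_read_policy(BUCKET, KEY_ARN)
print(json.dumps(policy, indent=2))
```

Only kms:Decrypt is included because the role in the question reads objects; a role that also uploads objects would additionally need kms:GenerateDataKey.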

NEW QUESTION 3

A company is evaluating its security posture. In the past, the company has observed issues with specific hosts and host header combinations that affected the company's business. The company has configured AWS WAF web ACLs as an initial step to mitigate these issues.

The company must create a log analysis solution for the AWS WAF web ACLs to monitor problematic activity. The company wants to process all the AWS WAF logs in a central location. The company must have the ability to filter out requests based on specific hosts.

A security engineer starts to enable access logging for the AWS WAF web ACLs.

What should the security engineer do next to meet these requirements with the MOST operational efficiency?

- A. Specify Amazon Redshift as the destination for the access logs. Deploy the Amazon Athena Redshift connector. Use Athena to query the data from Amazon Redshift and to filter the logs by host.

- B. Specify Amazon CloudWatch as the destination for the access logs. Use Amazon CloudWatch Logs Insights to design a query to filter the logs by host.

- C. Specify Amazon CloudWatch as the destination for the access logs. Export the CloudWatch logs to an Amazon S3 bucket. Use Amazon Athena to query the logs and to filter the logs by host.

- D. Specify Amazon CloudWatch as the destination for the access logs. Use Amazon Redshift Spectrum to query the logs and to filter the logs by host.

Answer: C

Explanation:

The correct answer is C. Specify Amazon CloudWatch as the destination for the access logs. Export the CloudWatch logs to an Amazon S3 bucket. Use Amazon Athena to query the logs and to filter the logs by host.

According to the AWS documentation [1], AWS WAF offers logging for the traffic that your web ACLs analyze. The logs include information such as the time that AWS WAF received the request from your protected AWS resource, detailed information about the request, and the action setting for the rule that the request matched. You can send your logs to an Amazon CloudWatch Logs log group, an Amazon Simple Storage Service (Amazon S3) bucket, or an Amazon Kinesis Data Firehose.

To create a log analysis solution for the AWS WAF web ACLs, you can use Amazon Athena, which is an interactive query service that makes it easy to analyze data in Amazon S3 using standard SQL [2]. You can use Athena to query and filter the AWS WAF logs by host or any other criteria. Athena is serverless, so there is no infrastructure to manage, and you pay only for the queries that you run.

To use Athena with AWS WAF logs, you need to export the CloudWatch logs to an S3 bucket. You can do this by creating a subscription filter that sends your log events to a Kinesis Data Firehose delivery stream, which then delivers the data to an S3 bucket [3]. Alternatively, you can use AWS DMS to migrate your CloudWatch logs to S3 [4].

After you have exported your CloudWatch logs to S3, you can create a table in Athena that points to your S3 bucket and use the AWS service log format that matches your log schema [5]. For example, because AWS WAF logs are in JSON format, you can use a JSON SerDe such as org.openx.data.jsonserde.JsonSerDe. Then you can run SQL queries on your Athena table and filter the results by host or any other field in your log data.

Therefore, this solution meets the requirements of creating a log analysis solution for the AWS WAF web ACLs with the most operational efficiency. This solution does not require setting up any additional infrastructure or services, and it leverages the existing capabilities of CloudWatch, S3, and Athena.
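
To make the "filter by host" step concrete, here is a minimal Python sketch of the same filter applied to exported log lines. In Athena this would be a SQL query instead; the sketch only illustrates the record shape. It assumes each line is one JSON log record whose `httpRequest.headers` field is a list of name/value pairs, as in the AWS WAF log format, and the sample records are made up.

```python
import json
from typing import Iterable, Iterator, Optional


def host_of(record: dict) -> Optional[str]:
    """Return the Host header value of a WAF log record, if present."""
    for header in record.get("httpRequest", {}).get("headers", []):
        # Header names are matched case-insensitively.
        if header.get("name", "").lower() == "host":
            return header.get("value")
    return None


def filter_by_host(lines: Iterable[str], host: str) -> Iterator[dict]:
    """Yield parsed log records whose Host header equals `host`."""
    for line in lines:
        record = json.loads(line)
        if host_of(record) == host:
            yield record


# Made-up sample records, one JSON document per line.
sample = [
    json.dumps({
        "action": "BLOCK",
        "httpRequest": {
            "clientIp": "192.0.2.10",
            "headers": [{"name": "Host", "value": "bad.example.com"}],
        },
    }),
    json.dumps({
        "action": "ALLOW",
        "httpRequest": {
            "clientIp": "192.0.2.11",
            "headers": [{"name": "host", "value": "www.example.com"}],
        },
    }),
]

matches = list(filter_by_host(sample, "bad.example.com"))
print(len(matches), matches[0]["action"])
```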

The other options are incorrect because:

- A. Specifying Amazon Redshift as the destination for the access logs is not possible, because AWS WAF does not support sending logs directly to Redshift. You would need to use an intermediate service such as Kinesis Data Firehose or AWS DMS to load the data from CloudWatch or S3 to Redshift. Deploying the Amazon Athena Redshift connector is not necessary, because you can query Redshift data directly from Athena without using a connector [6]. This solution would also incur additional costs and operational overhead of managing a Redshift cluster.

- B. Specifying Amazon CloudWatch as the destination for the access logs is possible, but using Amazon CloudWatch Logs Insights to design a query to filter the logs by host is not efficient or scalable. CloudWatch Logs Insights is a feature that enables you to interactively search and analyze your log data in CloudWatch Logs [7]. However, CloudWatch Logs Insights has some limitations, such as a maximum query duration of 20 minutes, a maximum of 20 log groups per query, and a maximum retention period of 24 months [8]. These limitations may affect your ability to perform complex and long-running analysis on your AWS WAF logs.

- D. Specifying Amazon CloudWatch as the destination for the access logs is possible, but using Amazon Redshift Spectrum to query the logs and filter them by host is not efficient or cost-effective. Redshift Spectrum is a feature of Amazon Redshift that enables you to run queries against exabytes of data in S3 without loading or transforming any data [9]. However, Redshift Spectrum requires a Redshift cluster to process the queries, which adds additional costs and operational overhead. Redshift Spectrum also charges you based on the number of bytes scanned by each query, which can be expensive if you have large volumes of log data [10].

References:

[1] Logging AWS WAF web ACL traffic - Amazon Web Services

[2] What Is Amazon Athena? - Amazon Athena

[3] Streaming CloudWatch Logs Data to Amazon S3 - Amazon CloudWatch Logs

[4] Migrate data from CloudWatch Logs using AWS Database Migration Service - AWS Database Migration Service

[5] Querying AWS service logs - Amazon Athena

[6] Querying data from Amazon Redshift - Amazon Athena

[7] Analyzing log data with CloudWatch Logs Insights - Amazon CloudWatch Logs

[8] CloudWatch Logs Insights quotas - Amazon CloudWatch

[9] Querying external data using Amazon Redshift Spectrum - Amazon Redshift

[10] Amazon Redshift Spectrum pricing - Amazon Redshift

NEW QUESTION 4

A developer is building a serverless application hosted on AWS that uses Amazon Redshift as a data store. The application has separate modules for read/write and read-only functionality. The modules need their own database users for compliance reasons.

Which combination of steps should a security engineer implement to grant appropriate access? (Select TWO.)

- A. Configure cluster security groups for each application module to control access to database users that are required for read-only and read/write.

- B. Configure a VPC endpoint for Amazon Redshift. Configure an endpoint policy that maps database users to each application module, and allow access to the tables that are required for read-only and read/write.

- C. Configure an IAM policy for each module. Specify the ARN of an Amazon Redshift database user that allows the GetClusterCredentials API call.

- D. Create local database users for each module.

- E. Configure an IAM policy for each module. Specify the ARN of an IAM user that allows the GetClusterCredentials API call.

Answer: CD

Explanation:

To grant appropriate access to the application modules, the security engineer should do the following:

- Configure an IAM policy for each module. Specify the ARN of an Amazon Redshift database user that allows the GetClusterCredentials API call. This allows the application modules to use temporary credentials to access the database with the permissions of the specified user.

- Create local database users for each module. This allows the security engineer to create separate users for read/write and read-only functionality, and to assign them different privileges on the database tables.
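
The per-module IAM policy can be sketched as follows. This is an illustration under assumptions: the Region, account ID, cluster name, and the database user names `app_rw` and `app_ro` are hypothetical, and a real policy may also need a `dbname` resource when the caller specifies a database name.

```python
import json


def dbuser_arn(region: str, account_id: str, cluster: str, db_user: str) -> str:
    """Build the ARN of a Redshift database user on a given cluster."""
    return f"arn:aws:redshift:{region}:{account_id}:dbuser:{cluster}/{db_user}"


def credentials_policy(region: str, account_id: str, cluster: str, db_user: str) -> dict:
    """Allow GetClusterCredentials for exactly one database user."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "redshift:GetClusterCredentials",
                "Resource": dbuser_arn(region, account_id, cluster, db_user),
            }
        ],
    }


# Hypothetical values: one policy per application module.
read_write_policy = credentials_policy("us-east-1", "111122223333", "example-cluster", "app_rw")
read_only_policy = credentials_policy("us-east-1", "111122223333", "example-cluster", "app_ro")

print(json.dumps(read_only_policy, indent=2))
```

Each module's role gets only its own policy, so the read-only module cannot request credentials for the read/write database user.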

NEW QUESTION 5

A company is hosting a web application on Amazon EC2 instances behind an Application Load Balancer (ALB). The application has become the target of a DoS attack. Application logging shows that requests are coming from a small number of client IP addresses, but the addresses change regularly.

The company needs to block the malicious traffic with a solution that requires the least amount of ongoing effort.

Which solution meets these requirements?

- A. Create an AWS WAF rate-based rule, and attach it to the ALB.

- B. Update the security group that is attached to the ALB to block the attacking IP addresses.

- C. Update the ALB subnet's network ACL to block the attacking client IP addresses.

- D. Create an AWS WAF rate-based rule, and attach it to the security group of the EC2 instances.

Answer: A
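
For illustration, a rate-based rule in the AWS WAF (WAFV2) JSON rule format might look like the sketch below. The rule name and the limit of 1000 requests are arbitrary example values, not figures from the question; the rule would be added to a web ACL that is associated with the ALB.

```python
import json


def rate_based_rule(name: str, limit: int, priority: int = 0) -> dict:
    """Build a WAFV2 rule that blocks client IPs exceeding `limit` requests."""
    return {
        "Name": name,
        "Priority": priority,
        "Statement": {
            "RateBasedStatement": {
                "Limit": limit,
                # Count requests separately for each client IP address.
                "AggregateKeyType": "IP",
            }
        },
        "Action": {"Block": {}},
        "VisibilityConfig": {
            "SampledRequestsEnabled": True,
            "CloudWatchMetricsEnabled": True,
            "MetricName": name,
        },
    }


rule = rate_based_rule("limit-per-ip", 1000)
print(json.dumps(rule, indent=2))
```

Because the rule tracks request rates per IP address on its own, nobody has to update block lists when the attacking addresses change.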

NEW QUESTION 6

A security engineer is designing an IAM policy for a script that will use the AWS CLI. The script currently assumes an IAM role that is attached to three AWS managed IAM policies: AmazonEC2FullAccess, AmazonDynamoDBFullAccess, and AmazonVPCFullAccess.

The security engineer needs to construct a least privilege IAM policy that will replace the AWS managed IAM policies that are attached to this role.

Which solution will meet these requirements in the MOST operationally efficient way?

- A. In AWS CloudTrail, create a trail for management events. Run the script with the existing AWS managed IAM policies. Use IAM Access Analyzer to generate a new IAM policy that is based on access activity in the trail. Replace the existing AWS managed IAM policies with the generated IAM policy for the role.

- B. Remove the existing AWS managed IAM policies from the role. Attach the IAM Access Analyzer Role Policy Generator to the role. Run the script. Return to IAM Access Analyzer and generate a least privilege IAM policy. Attach the new IAM policy to the role.

- C. Create an account analyzer in IAM Access Analyzer. Create an archive rule that has a filter that checks whether the PrincipalArn value matches the ARN of the role. Run the script. Remove the existing AWS managed IAM policies from the role.

- D. In AWS CloudTrail, create a trail for management events. Remove the existing AWS managed IAM policies from the role. Run the script. Find the authorization failure in the trail event that is associated with the script. Create a new IAM policy that includes the action and resource that caused the authorization failure. Repeat the process until the script succeeds. Attach the new IAM policy to the role.

Answer: A

NEW QUESTION 7

A security engineer needs to create an AWS Key Management Service (AWS KMS) key that will be used to encrypt all data stored in a company’s Amazon S3 buckets in the us-west-1 Region. The key will use server-side encryption. Usage of the key must be limited to requests coming from Amazon S3 within the company's account.

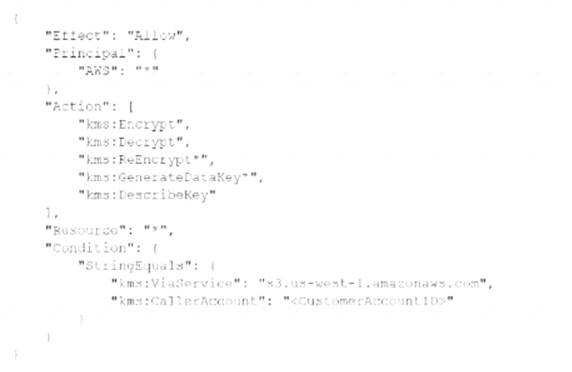

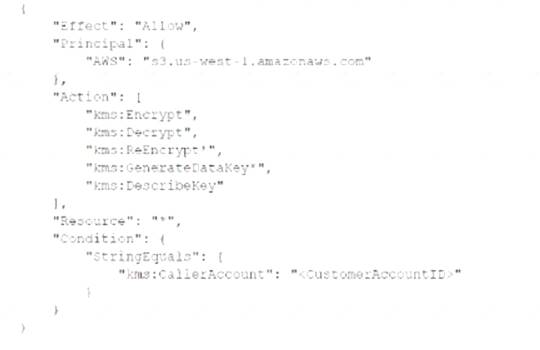

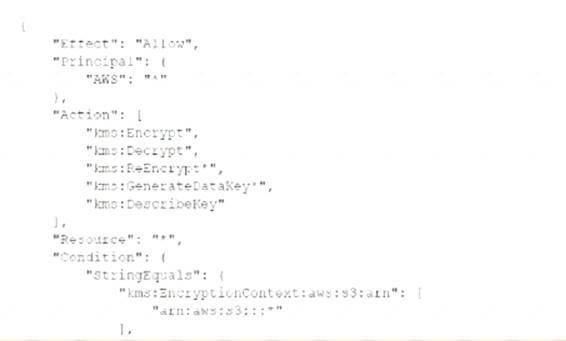

Which statement in the KMS key policy will meet these requirements?

A)

B)

C)

- A. Option A

- B. Option B

- C. Option C

Answer: A

NEW QUESTION 8

A company has several workloads running on AWS. Employees are required to authenticate using on-premises ADFS and SSO to access the AWS Management Console. Developers migrated an existing legacy web application to an Amazon EC2 instance. Employees need to access this application from anywhere on the internet, but currently, there is no authentication system built into the application.

How should the Security Engineer implement employee-only access to this system without changing the application?

- A. Place the application behind an Application Load Balancer (ALB). Use Amazon Cognito as authentication for the ALB. Define a SAML-based Amazon Cognito user pool and connect it to ADFS.

- B. Implement AWS SSO in the master account and link it to ADFS as an identity provider. Define the EC2 instance as a managed resource, then apply an IAM policy on the resource.

- C. Define an Amazon Cognito identity pool, then install the connector on the Active Directory server. Use the Amazon Cognito SDK on the application instance to authenticate the employees using their Active Directory user names and passwords.

- D. Create an AWS Lambda custom authorizer as the authenticator for a reverse proxy on Amazon EC2. Ensure the security group on Amazon EC2 only allows access from the Lambda function.

Answer: A

Explanation:

https://docs.aws.amazon.com/elasticloadbalancing/latest/application/listener-authenticate-users.html

NEW QUESTION 9

A company is using AWS WAF to protect a customized public API service that is based on Amazon EC2 instances. The API uses an Application Load Balancer.

The AWS WAF web ACL is configured with an AWS Managed Rules rule group. After a software upgrade to the API and the client application, some types of requests are no longer working and are causing application stability issues. A security engineer discovers that AWS WAF logging is not turned on for the web ACL.

The security engineer needs to immediately return the application to service, resolve the issue, and ensure that logging is not turned off in the future. The security engineer turns on logging for the web ACL and specifies Amazon CloudWatch Logs as the destination.

Which additional set of steps should the security engineer take to meet the requirements?

- A. Edit the rules in the web ACL to include rules with Count actions. Review the logs to determine which rule is blocking the request. Modify the IAM policy of all AWS WAF administrators so that they cannot remove the logging configuration for any AWS WAF web ACLs.

- B. Edit the rules in the web ACL to include rules with Count actions. Review the logs to determine which rule is blocking the request. Modify the AWS WAF resource policy so that AWS WAF administrators cannot remove the logging configuration for any AWS WAF web ACLs.

- C. Edit the rules in the web ACL to include rules with Count and Challenge actions. Review the logs to determine which rule is blocking the request. Modify the AWS WAF resource policy so that AWS WAF administrators cannot remove the logging configuration for any AWS WAF web ACLs.

- D. Edit the rules in the web ACL to include rules with Count and Challenge actions. Review the logs to determine which rule is blocking the request. Modify the IAM policy of all AWS WAF administrators so that they cannot remove the logging configuration for any AWS WAF web ACLs.

Answer: A

Explanation:

This answer is correct because it meets the requirements of returning the application to service, resolving the issue, and ensuring that logging is not turned off in the future. By editing the rules in the web ACL to include rules with Count actions, the security engineer can test the effect of each rule without blocking or allowing requests. By reviewing the logs, the security engineer can identify which rule is causing the problem and modify or delete it accordingly. By modifying the IAM policy of all AWS WAF administrators, the security engineer can restrict their permissions to prevent them from removing the logging configuration for any AWS WAF web ACLs.
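
The IAM restriction described above could be sketched as an explicit deny statement added to the administrators' policy. This is one possible shape, assuming the administrators manage web ACLs through the WAFV2 API; the statement ID is a made-up label.

```python
import json

# Explicit deny: overrides any Allow the administrators otherwise have.
deny_logging_removal = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyRemovingWafLogging",  # hypothetical label
            "Effect": "Deny",
            "Action": "wafv2:DeleteLoggingConfiguration",
            "Resource": "*",
        }
    ],
}

print(json.dumps(deny_logging_removal, indent=2))
```

An explicit deny is used because it takes precedence over any allow statement that a broader administrator policy might contain.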

NEW QUESTION 10

A company has several petabytes of data. The company must preserve this data for 7 years to comply with regulatory requirements. The company's compliance team asks a security officer to develop a strategy that will prevent anyone from changing or deleting the data.

Which solution will meet this requirement MOST cost-effectively?

- A. Create an Amazon S3 bucket. Configure the bucket to use S3 Object Lock in compliance mode. Upload the data to the bucket. Create a resource-based bucket policy that meets all the regulatory requirements.

- B. Create an Amazon S3 bucket. Configure the bucket to use S3 Object Lock in governance mode. Upload the data to the bucket. Create a user-based IAM policy that meets all the regulatory requirements.

- C. Create a vault in Amazon S3 Glacier. Create a Vault Lock policy in S3 Glacier that meets all the regulatory requirements. Upload the data to the vault.

- D. Create an Amazon S3 bucket. Upload the data to the bucket. Use a lifecycle rule to transition the data to a vault in S3 Glacier. Create a Vault Lock policy that meets all the regulatory requirements.

Answer: C

Explanation:

To preserve the data for 7 years and prevent anyone from changing or deleting it, the security officer needs to use a service that can store the data securely and enforce compliance controls. The most cost-effective way to do this is to use Amazon S3 Glacier, which is a low-cost storage service for data archiving and long-term backup. S3 Glacier allows you to create a vault, which is a container for storing archives. Archives are any data such as photos, videos, or documents that you want to store durably and reliably.

S3 Glacier also offers a feature called Vault Lock, which helps you to easily deploy and enforce compliance controls for individual vaults with a Vault Lock policy. You can specify controls such as “write once read many” (WORM) in a Vault Lock policy and lock the policy from future edits. Once a Vault Lock policy is locked, the policy can no longer be changed or deleted. S3 Glacier enforces the controls set in the Vault Lock policy to help achieve your compliance objectives. For example, you can use Vault Lock policies to enforce data retention by denying deletes for a specified period of time.

To use S3 Glacier and Vault Lock, the security officer needs to follow these steps:

- Create a vault in S3 Glacier using the AWS Management Console, AWS Command Line Interface (AWS CLI), or AWS SDKs.

- Create a Vault Lock policy in S3 Glacier that meets all the regulatory requirements using the IAM policy language. The policy can include conditions such as aws:CurrentTime or aws:SecureTransport to further restrict access to the vault.

- Initiate the lock by attaching the Vault Lock policy to the vault, which sets the lock to an in-progress state and returns a lock ID. While the policy is in the in-progress state, you have 24 hours to validate your Vault Lock policy before the lock ID expires. To prevent your vault from exiting the in-progress state, you must complete the Vault Lock process within these 24 hours. Otherwise, your Vault Lock policy will be deleted.

- Use the lock ID to complete the lock process. If the Vault Lock policy doesn’t work as expected, you can stop the Vault Lock process and restart from the beginning.

- Upload the data to the vault using either direct upload or multipart upload methods.

For more information about S3 Glacier and Vault Lock, see S3 Glacier Vault Lock.
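
A Vault Lock policy that enforces the 7-year retention could look roughly like the sketch below. It denies archive deletion while an archive is younger than the retention period. The Region, account ID, and vault name are placeholders, and 7 years is approximated as 7 × 365 days.

```python
import json

RETENTION_DAYS = 7 * 365  # approximation of 7 years, ignoring leap days


def vault_lock_policy(vault_arn: str, retention_days: int) -> dict:
    """Deny glacier:DeleteArchive for archives younger than the retention period."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "deny-delete-before-retention-ends",
                "Principal": "*",
                "Effect": "Deny",
                "Action": "glacier:DeleteArchive",
                "Resource": [vault_arn],
                "Condition": {
                    "NumericLessThan": {
                        "glacier:ArchiveAgeInDays": str(retention_days)
                    }
                },
            }
        ],
    }


# Hypothetical vault ARN, for illustration only.
policy = vault_lock_policy(
    "arn:aws:glacier:us-west-2:111122223333:vaults/example-vault",
    RETENTION_DAYS,
)
print(json.dumps(policy, indent=2))
```

Once the lock is completed, this policy can no longer be changed, so it is worth validating it during the 24-hour in-progress window.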

The other options are incorrect because:

- Option A is incorrect because it is not the most cost-effective choice. S3 Object Lock is a feature that allows you to store objects using a WORM model in S3. You can apply two types of object locks: retention periods and legal holds. A retention period specifies a fixed period of time during which an object remains locked. A legal hold is an indefinite lock on an object until it is removed. In compliance mode, S3 Object Lock prevents locked object versions from being overwritten or deleted by any user, including the root user in your AWS account. However, S3 Object Lock requires that you enable versioning on your bucket, which will incur additional storage costs for storing multiple versions of an object.

- Option B is incorrect because creating an Amazon S3 bucket and configuring it to use S3 Object Lock in governance mode will not prevent anyone from changing or deleting the data. S3 Object Lock in governance mode works similarly to compliance mode, except that users with specific IAM permissions can change or delete objects that are locked. This means that users who have s3:BypassGovernanceRetention permission can remove retention periods from objects and overwrite or delete them before they expire. This option does not provide strong enforcement for compliance controls as required by the regulatory requirements.

- Option D is incorrect because creating an Amazon S3 bucket and using a lifecycle rule to transition the data to a vault in S3 Glacier will not prevent anyone from changing or deleting the data. Lifecycle rules are actions that Amazon S3 automatically performs on objects during their lifetime. You can use lifecycle rules to transition objects between storage classes or expire them after a certain period of time. However, lifecycle rules do not apply any compliance controls on objects or prevent them from being modified or deleted by users. Moreover, transitioning objects from S3 to S3 Glacier using lifecycle rules will incur additional charges for retrieval requests and data transfers.

NEW QUESTION 11

A company manages three separate AWS accounts for its production, development, and test environments. Each developer is assigned a unique IAM user under the development account. A new application hosted on an Amazon EC2 instance in the development account requires read access to the archived documents stored in an Amazon S3 bucket in the production account.

How should access be granted?

- A. Create an IAM role in the production account and allow EC2 instances in the development account to assume that role using the trust policy. Provide read access for the required S3 bucket to this role.

- B. Use a custom identity broker to allow Developer IAM users to temporarily access the S3 bucket.

- C. Create a temporary IAM user for the application to use in the production account.

- D. Create a temporary IAM user in the production account and provide read access to Amazon S3. Generate the temporary IAM user's access key and secret key and store these on the EC2 instance used by the application in the development account.

Answer: A

Explanation:

https://aws.amazon.com/premiumsupport/knowledge-center/cross-account-access-s3/
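
The two policies behind option A can be sketched as follows: a trust policy on the production-account role that names the development-account instance role, and a permissions policy that grants read access to the bucket. All ARNs, account IDs, and names here are hypothetical.

```python
import json


def trust_policy(dev_instance_role_arn: str) -> dict:
    """Trust policy for the production role: who may assume it."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": dev_instance_role_arn},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def bucket_read_policy(bucket: str) -> dict:
    """Permissions policy for the production role: read-only bucket access."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:ListBucket"],
                "Resource": [
                    f"arn:aws:s3:::{bucket}",
                    f"arn:aws:s3:::{bucket}/*",
                ],
            }
        ],
    }


# Hypothetical development-account instance role and production bucket.
trust = trust_policy("arn:aws:iam::111122223333:role/dev-app-instance-role")
permissions = bucket_read_policy("example-archive-bucket")
print(json.dumps(trust, indent=2))
```

The development-account instance role also needs permission to call sts:AssumeRole on the production role, so that the application can obtain temporary credentials instead of stored access keys.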

NEW QUESTION 12

A company has a single AWS account and uses an Amazon EC2 instance to test application code. The company recently discovered that the instance was compromised. The instance was serving up malware. The analysis of the instance showed that the instance was compromised 35 days ago.

A security engineer must implement a continuous monitoring solution that automatically notifies the company’s security team about compromised instances through an email distribution list for high severity findings. The security engineer must implement the solution as soon as possible.

Which combination of steps should the security engineer take to meet these requirements? (Choose three.)

- A. Enable AWS Security Hub in the AWS account.

- B. Enable Amazon GuardDuty in the AWS account.

- C. Create an Amazon Simple Notification Service (Amazon SNS) topic. Subscribe the security team’s email distribution list to the topic.

- D. Create an Amazon Simple Queue Service (Amazon SQS) queue. Subscribe the security team’s email distribution list to the queue.

- E. Create an Amazon EventBridge (Amazon CloudWatch Events) rule for GuardDuty findings of high severity. Configure the rule to publish a message to the topic.

- F. Create an Amazon EventBridge (Amazon CloudWatch Events) rule for Security Hub findings of high severity. Configure the rule to publish a message to the queue.

Answer: BCE
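The EventBridge rule in option E depends on an event pattern that selects high-severity GuardDuty findings. The sketch below builds such a pattern, assuming 7 as the lower bound of the high-severity band. The `matches` helper is a hypothetical local stand-in for this one pattern, not EventBridge's own matching engine.

```python
import json

# Assumed lower bound of GuardDuty's "high" severity band.
HIGH_SEVERITY_MIN = 7


def guardduty_high_severity_pattern(min_severity: float = HIGH_SEVERITY_MIN) -> dict:
    """Event pattern for an EventBridge rule that targets an SNS topic."""
    return {
        "source": ["aws.guardduty"],
        "detail-type": ["GuardDuty Finding"],
        "detail": {"severity": [{"numeric": [">=", min_severity]}]},
    }


def matches(event: dict, min_severity: float = HIGH_SEVERITY_MIN) -> bool:
    """Local approximation of how the pattern above filters events."""
    return (
        event.get("source") == "aws.guardduty"
        and event.get("detail-type") == "GuardDuty Finding"
        and event.get("detail", {}).get("severity", 0) >= min_severity
    )


pattern = guardduty_high_severity_pattern()
print(json.dumps(pattern, indent=2))

sample_high = {
    "source": "aws.guardduty",
    "detail-type": "GuardDuty Finding",
    "detail": {"severity": 8},
}
sample_low = {
    "source": "aws.guardduty",
    "detail-type": "GuardDuty Finding",
    "detail": {"severity": 2},
}
print(matches(sample_high), matches(sample_low))
```

An SNS topic suits this requirement because it supports email subscriptions, whereas an SQS queue has no email delivery.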

NEW QUESTION 13

A company is implementing a new application in a new AWS account. A VPC and subnets have been created for the application. The application VPC has been peered to an existing VPC in another account in the same AWS Region for database access. Amazon EC2 instances will regularly be created and terminated in the application VPC, but only some of them will need access to the databases in the peered VPC over TCP port 1521. A security engineer must ensure that only the EC2 instances that need access to the databases can access them through the network.

How can the security engineer implement this solution?

- A. Create a new security group in the database VPC and create an inbound rule that allows all traffic from the IP address range of the application VPC. Add a new network ACL rule on the database subnets. Configure the rule to TCP port 1521 from the IP address range of the application VPC. Attach the new security group to the database instances that the application instances need to access.

- B. Create a new security group in the application VPC with an inbound rule that allows the IP address range of the database VPC over TCP port 1521. Create a new security group in the database VPC with an inbound rule that allows the IP address range of the application VPC over port 1521. Attach the new security group to the database instances and the application instances that need database access.

- C. Create a new security group in the application VPC with no inbound rules. Create a new security group in the database VPC with an inbound rule that allows TCP port 1521 from the new application security group in the application VPC. Attach the application security group to the application instances that need database access, and attach the database security group to the database instances.

- D. Create a new security group in the application VPC with an inbound rule that allows the IP address range of the database VPC over TCP port 1521. Add a new network ACL rule on the database subnets. Configure the rule to allow all traffic from the IP address range of the application VPC. Attach the new security group to the application instances that need database access.

Answer: C
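The database security group rule in option C references the application security group instead of a CIDR range, so only instances that carry that group can reach port 1521. The sketch below builds the rule in the `IpPermissions` shape that the EC2 `AuthorizeSecurityGroupIngress` API accepts. The group ID and account ID are hypothetical, and nothing is sent to AWS.

```python
# Hypothetical identifiers, used only for illustration.
APP_SG_ID = "sg-0123456789abcdef0"
APP_ACCOUNT_ID = "222222222222"
ORACLE_PORT = 1521


def db_ingress_from_app_sg(app_sg_id: str, app_account_id: str,
                           port: int = ORACLE_PORT) -> list:
    """Inbound rule for the database security group that allows one TCP
    port from a security group in the peered application VPC."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            # Referencing a group in another account requires its owner ID.
            "UserIdGroupPairs": [
                {"GroupId": app_sg_id, "UserId": app_account_id}
            ],
        }
    ]


ip_permissions = db_ingress_from_app_sg(APP_SG_ID, APP_ACCOUNT_ID)
print(ip_permissions)
```

Because the rule follows group membership, instances that are created and terminated in the application VPC gain or lose database access without any rule changes.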

NEW QUESTION 14

A company is using AWS Secrets Manager to store secrets for its production Amazon RDS database. The Security Officer has asked that secrets be rotated every 3 months. Which solutions would allow the company to securely rotate the secrets? (Select TWO.)

- A. Place the RDS instance in a public subnet and an AWS Lambda function outside the VPC. Schedule the Lambda function to run every 3 months to rotate the secrets.

- B. Place the RDS instance in a private subnet and an AWS Lambda function inside the VPC in the private subnet. Configure the private subnet to use a NAT gateway. Schedule the Lambda function to run every 3 months to rotate the secrets.

- C. Place the RDS instance in a private subnet and an AWS Lambda function outside the VPC. Configure the private subnet to use an internet gateway. Schedule the Lambda function to run every 3 months to rotate the secrets.

- D. Place the RDS instance in a private subnet and an AWS Lambda function inside the VPC in the private subnet. Schedule the Lambda function to run quarterly to rotate the secrets.

- E. Place the RDS instance in a private subnet and an AWS Lambda function inside the VPC in the private subnet. Configure a Secrets Manager interface endpoint. Schedule the Lambda function to run every 3 months to rotate the secrets.

Answer: BE

Explanation:

Options B and E can securely rotate the secrets for the production RDS database. Secrets Manager manages secrets such as database credentials, API keys, and passwords, and it can rotate them automatically by running a Lambda function on a schedule. The Lambda function needs network access to both the RDS instance and the Secrets Manager service. Option B places the RDS instance and the Lambda function in a private subnet of the VPC and configures a NAT gateway so that the function can reach Secrets Manager over the internet. Option E places the RDS instance and the Lambda function in the private subnet and configures a Secrets Manager interface endpoint, which is a private connection between the VPC and Secrets Manager. Option A exposes the database in a public subnet, option C leaves the function outside the VPC with no path to the private database, and option D gives the function no route to Secrets Manager.
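The 3-month schedule can be expressed as a rotation rule on the secret. The sketch below builds the parameters for the Secrets Manager `RotateSecret` operation, assuming that 90 days is an acceptable reading of "every 3 months". The secret name and Lambda ARN are hypothetical, and no API call is made.

```python
# Assumption: "every 3 months" is approximated as 90 days.
ROTATION_DAYS = 90


def build_rotation_request(secret_id: str, rotation_lambda_arn: str,
                           days: int = ROTATION_DAYS) -> dict:
    """Parameters for RotateSecret: which secret to rotate, which Lambda
    function performs the rotation, and how often it runs."""
    return {
        "SecretId": secret_id,
        "RotationLambdaARN": rotation_lambda_arn,
        "RotationRules": {"AutomaticallyAfterDays": days},
    }


rotation_request = build_rotation_request(
    "prod/rds/app-user",  # hypothetical secret name
    "arn:aws:lambda:us-east-1:111111111111:function:rotate-rds-secret",  # hypothetical ARN
)
print(rotation_request)
```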

NEW QUESTION 15

An application is running on an Amazon EC2 instance that has an IAM role attached. The IAM role provides access to an AWS Key Management Service (AWS KMS) customer managed key and an Amazon S3 bucket. The key is used to access 2 TB of sensitive data that is stored in the S3 bucket.

A security engineer discovers a potential vulnerability on the EC2 instance that could result in the compromise of the sensitive data. Due to other critical operations, the security engineer cannot immediately shut down the EC2 instance for vulnerability patching.

What is the FASTEST way to prevent the sensitive data from being exposed?

- A. Download the data from the existing S3 bucket to a new EC2 instance. Then delete the data from the S3 bucket. Re-encrypt the data with a client-based key. Upload the data to a new S3 bucket.

- B. Block access to the public range of S3 endpoint IP addresses by using a host-based firewall. Ensure that internet-bound traffic from the affected EC2 instance is routed through the host-based firewall.

- C. Revoke the IAM role's active session permissions. Update the S3 bucket policy to deny access to the IAM role. Remove the IAM role from the EC2 instance profile.

- D. Disable the current key. Create a new KMS key that the IAM role does not have access to, and re-encrypt all the data with the new key. Schedule the compromised key for deletion.

Answer: D

NEW QUESTION 16

A company has deployed servers on Amazon EC2 instances in a VPC. External vendors access these servers over the internet. Recently, the company deployed a new application on EC2 instances in a new CIDR range. The company needs to make the application available to the vendors.

A security engineer verified that the associated security groups and network ACLs are allowing the required ports in the inbound direction. However, the vendors cannot connect to the application.

Which solution will provide the vendors access to the application?

- A. Modify the security group that is associated with the EC2 instances to have the same outbound rules as inbound rules.

- B. Modify the network ACL that is associated with the CIDR range to allow outbound traffic to ephemeral ports.

- C. Modify the inbound rules on the internet gateway to allow the required ports.

- D. Modify the network ACL that is associated with the CIDR range to have the same outbound rules as inbound rules.

Answer: B

Explanation:

The correct answer is B. Modify the network ACL that is associated with the CIDR range to allow outbound traffic to ephemeral ports.

This answer is correct because network ACLs are stateless: they do not automatically allow return traffic for inbound connections. The network ACL that is associated with the CIDR range of the new application must therefore have outbound rules that allow traffic to ephemeral ports, which are the temporary ports that the vendors’ machines use to communicate with the application servers. Ephemeral ports are typically in the range 1024-65535 [1]. Without such rules, the vendors cannot connect to the application.

The other options are incorrect because:

A. Modifying the security group that is associated with the EC2 instances to have the same outbound rules as inbound rules is not a solution, because security groups are stateful: they automatically allow return traffic for inbound connections. There is no need to add outbound rules to the security group for the vendors to access the application [2].

C. Modifying the inbound rules on the internet gateway to allow the required ports is not a solution, because internet gateways do not have inbound or outbound rules. Internet gateways are VPC components that enable communication between instances in a VPC and the internet. They do not filter traffic based on ports or protocols [3].

D. Modifying the network ACL that is associated with the CIDR range to have the same outbound rules as inbound rules is not a solution, because it does not address the issue of ephemeral ports. The outbound rules of the network ACL must match the ephemeral port range of the vendors’ machines, not necessarily the inbound rules of the network ACL [4].

References:

[1] Ephemeral port - Wikipedia

[2] Security groups for your VPC - Amazon Virtual Private Cloud

[3] Internet gateways - Amazon Virtual Private Cloud

[4] Network ACLs - Amazon Virtual Private Cloud
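The stateless behaviour described in the explanation can be illustrated with a small simulation. This is a simplified model written for this question, not AWS's implementation: rules match on destination port only, the lowest rule number wins, and unmatched traffic is denied. The application port 443 and client port 50000 are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    number: int      # lower numbers are evaluated first
    port_from: int
    port_to: int
    allow: bool


def evaluate(rules, port: int) -> bool:
    """Return the decision of the first matching rule; deny if none match."""
    for rule in sorted(rules, key=lambda r: r.number):
        if rule.port_from <= port <= rule.port_to:
            return rule.allow
    return False


def connection_works(inbound, outbound, server_port: int, client_port: int) -> bool:
    """A stateless ACL checks the request and the reply independently.
    The reply is addressed to the client's ephemeral port."""
    return evaluate(inbound, server_port) and evaluate(outbound, client_port)


inbound = [Rule(100, 443, 443, True)]
outbound_mirrored = [Rule(100, 443, 443, True)]       # option D
outbound_ephemeral = [Rule(100, 1024, 65535, True)]   # option B

print(connection_works(inbound, outbound_mirrored, 443, 50000))
print(connection_works(inbound, outbound_ephemeral, 443, 50000))
```

Mirroring the inbound rules fails because the reply leaves for port 50000, which the mirrored rule does not cover; the ephemeral range does cover it.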

NEW QUESTION 17

A company is migrating one of its legacy systems from an on-premises data center to AWS. The application server will run on AWS, but the database must remain in the on-premises data center for compliance reasons. The database is sensitive to network latency. Additionally, the data that travels between the on-premises data center and AWS must have IPsec encryption.

Which combination of AWS solutions will meet these requirements? (Choose two.)

- A. AWS Site-to-Site VPN

- B. AWS Direct Connect

- C. AWS VPN CloudHub

- D. VPC peering

- E. NAT gateway

Answer: AB

Explanation:

The correct combination of AWS solutions is A (AWS Site-to-Site VPN) and B (AWS Direct Connect). Running the VPN connection over the Direct Connect link provides IPsec encryption on a dedicated, low-latency path.

* A. AWS Site-to-Site VPN is a service that allows you to securely connect your on-premises data center to your AWS VPC using IPsec encryption, typically over the internet. This solution meets the requirement of encrypting the data in transit between the on-premises data center and AWS.

* B. AWS Direct Connect is a service that allows you to establish a dedicated network connection between your on-premises data center and your AWS VPC. This solution meets the requirement of reducing network latency between the on-premises data center and AWS.

* C. AWS VPN CloudHub is a service that allows you to connect multiple VPN connections from different locations to the same virtual private gateway in your AWS VPC. This solution is not relevant for this scenario, as there is only one on-premises data center involved.

* D. VPC peering is a service that allows you to connect two or more VPCs in the same or different regions using private IP addresses. This solution does not meet the requirement of connecting an on-premises data center to AWS, as it only works for VPCs.

* E. NAT gateway is a service that allows you to enable internet access for instances in a private subnet in your AWS VPC. This solution does not meet the requirement of connecting an on-premises data center to AWS, as it only works for outbound traffic from your VPC.

NEW QUESTION 18

A company plans to create individual child accounts within an existing organization in AWS Organizations for each of its DevOps teams. AWS CloudTrail has been enabled and configured on all accounts to write audit logs to an Amazon S3 bucket in a centralized AWS account. A security engineer needs to ensure that DevOps team members are unable to modify or disable this configuration.

How can the security engineer meet these requirements?

- A. Create an IAM policy that prohibits changes to the specific CloudTrail trail and apply the policy to the AWS account root user.

- B. Create an S3 bucket policy in the specified destination account for the CloudTrail trail that prohibits configuration changes from the AWS account root user in the source account.

- C. Create an SCP that prohibits changes to the specific CloudTrail trail and apply the SCP to the appropriate organizational unit or account in Organizations.

- D. Create an IAM policy that prohibits changes to the specific CloudTrail trail and apply the policy to a new IAM group. Have team members use individual IAM accounts that are members of the new IAM group.

Answer: C
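Option C describes a service control policy (SCP). A minimal sketch of what such a policy might look like follows. The trail ARN is a hypothetical placeholder, and the list of denied actions is one reasonable selection, not an exhaustive one.

```python
import json

# Hypothetical trail ARN, used only for illustration.
TRAIL_ARN = "arn:aws:cloudtrail:us-east-1:333333333333:trail/org-audit-trail"


def build_cloudtrail_guard_scp(trail_arn: str) -> dict:
    """SCP that denies actions which would stop, delete, or reconfigure
    the given trail. Attach it to the DevOps OU or member accounts."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ProtectAuditTrail",
                "Effect": "Deny",
                "Action": [
                    "cloudtrail:StopLogging",
                    "cloudtrail:DeleteTrail",
                    "cloudtrail:UpdateTrail",
                    "cloudtrail:PutEventSelectors",
                ],
                "Resource": trail_arn,
            }
        ],
    }


scp = build_cloudtrail_guard_scp(TRAIL_ARN)
print(json.dumps(scp, indent=2))
```

An SCP limits every principal in the member accounts it is attached to, including the account root user, which IAM policies inside those accounts cannot do.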

NEW QUESTION 19

A company's security engineer is designing an isolation procedure for Amazon EC2 instances as part of an incident response plan. The security engineer needs to isolate a target instance to block any traffic to and from the target instance, except for traffic from the company's forensics team. Each of the company's EC2 instances has its own dedicated security group. The EC2 instances are deployed in subnets of a VPC. A subnet can contain multiple instances.

The security engineer is testing the procedure for EC2 isolation and opens an SSH session to the target instance. The procedure starts to simulate access to the target instance by an attacker. The security engineer removes the existing security group rules and adds security group rules to give the forensics team access to the target instance on port 22.

After these changes, the security engineer notices that the SSH connection is still active and usable. When the security engineer runs a ping command to the public IP address of the target instance, the ping command is blocked.

What should the security engineer do to isolate the target instance?

- A. Add an inbound rule to the security group to allow traffic from 0.0.0.0/0 for all ports. Add an outbound rule to the security group to allow traffic to 0.0.0.0/0 for all ports. Then immediately delete these rules.

- B. Remove the port 22 security group rule. Attach an instance role policy that allows AWS Systems Manager Session Manager connections so that the forensics team can access the target instance.

- C. Create a network ACL that is associated with the target instance's subnet. Add a rule at the top of the inbound rule set to deny all traffic from 0.0.0.0/0. Add a rule at the top of the outbound rule set to deny all traffic to 0.0.0.0/0.

- D. Create an AWS Systems Manager document that adds a host-level firewall rule to block all inbound traffic and outbound traffic. Run the document on the target instance.

Answer: C
